The 8-Year Evolution of AI Face-Swapping «Deepfakes»

AI-generated images and videos, or deepfakes, have seen a surge in development in recent years. In this article we explore that history and the key milestones along the way.

What do the faces in the image above have in common? Nothing. They were all invented by AI, or more precisely, synthesized by an AI that learned from millions of images and now produces results that are hard to tell from real photos.

Generative Adversarial Network (GAN) Technology

Behind this kind of high-quality synthetic imagery is «generative adversarial network» (GAN) technology. Such networks consist of two AI agents: a generator that produces fake images and a discriminator that judges whether an image is real. Each time the discriminator catches a fake, the generator uses that feedback to improve.

In this way both agents grow stronger during training. Eventually the generator can produce synthetic images that humans can barely distinguish from real ones.
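The contest between the two agents is commonly written as a minimax game. As a sketch in the notation of the original 2014 GAN paper (these symbols are not used elsewhere in this article): G is the generator, D is the discriminator, x is a real image, and z is the random noise that G turns into a fake image.

```latex
\min_G \max_D V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}(x)}\big[\log D(x)\big]
  + \mathbb{E}_{z \sim p_z(z)}\big[\log\big(1 - D(G(z))\big)\big]
```

D is rewarded for scoring real images near 1 and generated images near 0, while G is rewarded for pushing D(G(z)) toward 1. That is the formal version of the feedback loop described above.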

Not All GANs Are the Same

In practice, the output of the original GAN and that of current GAN variants differ considerably in quality.

Ian Goodfellow and the Development of GANs

Ian Goodfellow, who later became a director of machine learning at Apple, once posted on Twitter about the development of deepfake technology over the years. He is widely credited as the inventor of GANs.

Here’s a look at how GANs advanced in face generation over the four and a half years his post covered:

A Brief History of GANs

The papers linked by Goodfellow show how deepfake technology has advanced rapidly with new AI architectures, large-scale datasets, and greater compute.

2014: The Birth Year of Deepfake

Goodfellow and colleagues published the first scientific paper introducing GANs, marking the birth of the technique. It was GANs that eventually led to the deepfakes we know today.

As early as 2014 there were signs that GANs could generate highly realistic faces.

2015: GANs Level Up

Researchers began combining GANs with convolutional neural networks (CNNs) tuned for image recognition. CNNs can process large amounts of data in parallel and run very efficiently on GPUs. This combination replaced the simpler networks that had previously driven the GAN agents and raised the credibility of generated results to a new level.

The more complex the convolutional network, the more convincing the synthetic faces. But in 2015, photorealistic images had not yet appeared.

2016: Deepfake Glasses and Face Manipulation

Researchers coupled two GANs so that agents in different networks could share information, enabling parallel learning.

Each agent slightly modified the training data. For example, one could generate faces with and without sunglasses. By then, generated faces were more believable, but «obviously fake» results were still common.

With coupled GANs, synthetic faces could wear sunglasses or jewelry, but the faces themselves still had many flaws and the «obviously fake» problem remained.

2017: NVIDIA’s Quality Leap and the First Deepfake Video

NVIDIA researchers addressed a major limitation of earlier GANs and achieved a big leap in quality:

The lower the resolution, the harder it was for the discriminator to tell real from fake, so generators tended to produce blurry images: sharper output simply exposed more mistakes. The AI turned out to be quite cunning.

NVIDIA’s solution was to train the network in stages: first the generator learned to create low-resolution images, then resolution was gradually increased.

Step by step, GANs gained high-resolution generation capability.
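The staged training described above can be sketched in a few lines. This is a minimal illustration, not NVIDIA's implementation: the 4×4 starting size, the 1024×1024 target, and all function names are assumptions chosen for the example. The blending step only mimics how a newly added higher-resolution layer can be faded in gradually.

```python
def resolution_schedule(start=4, final=1024):
    """List the square resolutions visited when doubling from start to final.

    The default sizes are illustrative assumptions, not taken from the article.
    """
    if start <= 0 or final < start:
        raise ValueError("need 0 < start <= final")
    sizes = [start]
    while sizes[-1] < final:
        sizes.append(sizes[-1] * 2)
    if sizes[-1] != final:
        raise ValueError("final must be start times a power of two")
    return sizes


def upsample_nearest(image):
    """Double the width and height of a 2D list by repeating each pixel."""
    out = []
    for row in image:
        wide = [pixel for pixel in row for _ in range(2)]
        out.append(wide)
        out.append(list(wide))
    return out


def fade_in(low_res, high_res, alpha):
    """Blend the upsampled old output with the new layer's output.

    alpha = 0.0 keeps only the old low-resolution result;
    alpha = 1.0 uses only the new high-resolution layer.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be between 0 and 1")
    upsampled = upsample_nearest(low_res)
    return [
        [(1.0 - alpha) * old + alpha * new for old, new in zip(old_row, new_row)]
        for old_row, new_row in zip(upsampled, high_res)
    ]
```

Calling `resolution_schedule()` gives the sequence of training stages. Raising `alpha` from 0 to 1 during a stage lets the new layer take over smoothly rather than disrupting what the network has already learned.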

GANs trained this way began to produce synthetic faces of unprecedented quality. Though still imperfect, they were hard to tell apart at a glance.

Faces generated in 2017 were far ahead of what came before; some were genuinely hard to distinguish from real ones.

While NVIDIA kept improving its GANs, Reddit user «deepfakes» was bringing the technology to the mainstream. In fall 2017 the first fake posted under the «deepfakes» name appeared: pornographic material in which a celebrity’s face was swapped onto an adult performer.

The Trouble with Pornographic Abuse

Since then, «deepfake» has become a catch-all term for AI-generated images and video. The «deep» refers to the many layers in the neural network—deep learning for image synthesis.

Deepfake porn also looked obviously fake, but it was very cheap to produce, and huge numbers of users flocked to Reddit and other platforms to watch these crude, uncanny videos. Celebrity faces like Scarlett Johansson’s were frequently used, in what was later called a «dark wormhole» of the internet.

2018: Stronger GAN Control and Deepfake on YouTube

Amid this wave, NVIDIA researchers again improved GAN control: they could adjust single image attributes, such as «black hair» or «smile» in a portrait.

This allowed features from training images to be transferred in a targeted way into AI-generated images. The approach was called «style transfer» and became a key part of many later AI projects.

Style transfer could control image AI—for example, generating only smiling faces.
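One way this kind of targeted transfer is often illustrated for style-based generators is «style mixing»: the generator receives one style vector per layer, and the styles for some layers are taken from a second image. The sketch below is a simplified illustration under that assumption. The function name, the layer labels, and the coarse/fine split are hypothetical and are not NVIDIA's code.

```python
def mix_styles(styles_a, styles_b, crossover):
    """Combine two per-layer style lists.

    Layers before `crossover` come from A (coarse traits such as pose),
    the remaining layers come from B (finer traits such as color).
    Which traits live in which layers is an assumption for illustration.
    """
    if len(styles_a) != len(styles_b):
        raise ValueError("both images need one style per layer")
    if not 0 <= crossover <= len(styles_a):
        raise ValueError("crossover must lie within the layer range")
    return styles_a[:crossover] + styles_b[crossover:]


# Hypothetical labels standing in for real style vectors.
face_a = ["a0", "a1", "a2", "a3"]
face_b = ["b0", "b1", "b2", "b3"]
mixed = mix_styles(face_a, face_b, crossover=2)
```

Here `mixed` keeps the first two layer styles of `face_a` and takes the last two from `face_b`. A real generator would then render an image from the mixed list.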

GANs of course are not limited to faces; the AI doesn’t care what pixels it outputs, only that it has the right training data. In late 2018, DeepMind demonstrated AI-generated food, scenery, and animals that looked impressively realistic.

Deep Video Portraits used GANs to improve video processing, and the first YouTube channel focused on deepfakes appeared: not just fake porn, but «deepfaked» politicians and Hollywood stars. People began asking whether AI could «resurrect» deceased actors.

Meanwhile, deepfake porn began to decline: in Q1 2018, Pornhub, Twitter, Gfycat, and Reddit banned such content. Many popular deepfake app sites went offline.

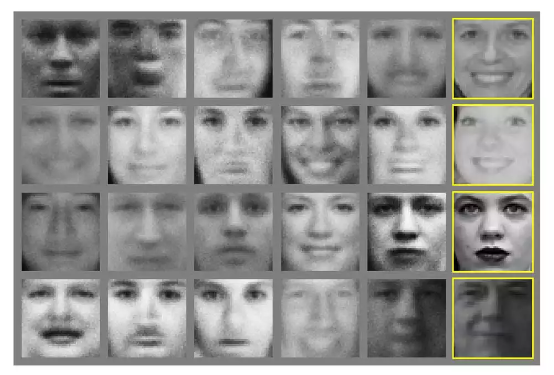

FaceShifter (rightmost image) could turn blurry source images into plausible fakes, outperforming the then state-of-the-art deepfake algorithm FSGAN (second from right).

Deepfakes Work Well—Disney Uses Them Too

Entertainment giant Disney began developing deepfake technology for filmmaking, and the first megapixel-scale deepfake tool was born. It generated 1024×1024 images (about one megapixel, 16 times the pixel count of 256×256), far beyond tools like DeepFaceLab, which worked at 256×256. Even in early 2021, DeepFaceLab 2.0 maxed out at 448×448.

In the long run, Disney’s deepfake tech may replace traditional VFX pipelines and avoid the months of rendering that short clips used to require.

Disney fans are eager for it. The Star Wars series The Mandalorian hadn’t yet used the megapixel deepfake feature, but fan deepfakes on YouTube were already rivaling Disney’s own CGI.

2021: Deepfake Tours, Live Streams, and Face Licensing

The year started with a Tom Cruise deepfake video. Its TikTok debut was so convincing that only careful study revealed flaws. It went viral and the «Deeptomcruise» channel quickly gained hundreds of thousands of followers. Creator Chris Umé, a VFX specialist, said each video took weeks to make.

Soon after, the Wombo AI app took the internet by storm: a few taps could turn any photo into a short clip of that person singing a famous song. Wombo learned from real performers and matched the photo face to the original singer’s expressions.

WOMBO AI is really impressive.

Disney also hired a well-known deepfake YouTuber, fueling speculation that more deepfake characters would appear in its shows. The late-2021 release of The Book of Boba Fett confirmed some of that.

Deepfakes in Social and Mass Media

Disney was not alone: Bruce Willis’s face appeared in a Russian commercial after a startup licensed his likeness and used deepfake technology to produce the ad.

NVIDIA released Alias-Free GAN in 2021, an improved StyleGAN2 with more consistent results under viewpoint changes. Months later, StyleGAN3 was released.

DeepFaceLab’s creators first showed DeepFaceLive in 2021. With proper training or pretrained models, it could swap faces in real-time video—but required a high-end GPU fit for AAA games.

In 2021, so-called diffusion models first matched GAN image quality. Though not yet used for deepfakes, they became the basis of OpenAI’s GLIDE image generator by year’s end.

2022: 3D GANs, DALL-E 2, and the Zelenskyy Deepfake

In January, two more impressive GAN advances appeared. Tel Aviv researchers demonstrated a StyleGAN2 variant that could easily manipulate faces in short clips—e.g., adding a smile or slimming the face—without extra training.

NVIDIA and Stanford researchers presented EG3D (Efficient Geometry-aware 3D GAN), allowing AI to generate consistent 3D-style images of people (or cats) from different viewpoints.

Conversely, 3D GANs could reconstruct 3D models from a single real image. So EG3D fakes were more convincing because they stayed consistent across views.

In 2022, researchers at the Stanford Internet Observatory found more than 1,000 suspicious fake profiles on LinkedIn in a two-week study. The profiles listed more than 70 companies as employers and were used to generate sales leads. When outreach worked, a real person would take over from the fake identity.

The Russia–Ukraine conflict also produced a historic deepfake moment.

A video showed a synthetic President Zelenskyy urging Ukrainians to lay down their arms. Low resolution and poor quality limited its impact. There’s no definitive proof it was AI-generated, but many outlets and experts treated it as a deepfake.

In April 2022, OpenAI released DALL-E 2, a system that generates images from text. The full rollout was expected in summer 2022.

DALL-E 2 and its diffusion models were not used for deepfakes, and OpenAI explicitly bans generating faces. Still, the technology will likely raise the bar for synthetic imagery.

Summary

When GAN inventor Goodfellow first presented his work in 2014, he likely didn’t expect it to fuel such rapid progress in AI-generated imagery. He has since warned that in the future, people will no longer be able to take images and videos on the internet at face value.

Eventually, even the best anti-deepfake algorithms may fail to detect the latest fakes, with disruptive effects across social, entertainment, and other domains. Deepfake researcher Hao Li has said this is no overreaction: images are just pixels with the right colors—it may only be a matter of time before AI finds the perfect arrangement.

As deepfakes spread on YouTube, Reface, Impressions, and elsewhere, synthetic imagery will permeate daily life. Humans once learned to form opinions in an age without video or photos; that clarity may now be obscured by new technology. As Goodfellow put it, «In that sense, AI may be blinding our generation’s eyes to the world.»